The Quest for a Universal Translator for Old, Obsolete Computer Files

To preserve bygone software, files, and more, researchers are working to emulate decades-old technology in the cloud.

Not so very long ago, web designers’ ambitions outstripped the infrastructure of the internet, so they had to resort to physical media to help carry their ideas. Dial-up modems were pokey, and the sluggish speed couldn’t handle large images or streaming video. “People did all sorts of projects that were too heavy for the live web,” says Tim Walsh, a digital archivist at the Canadian Centre for Architecture (CCA).

One workaround to make these projects possible was to separate a website from the web. “A simple solution was to simply burn all the HTML, JavaScript, and other large files to a CD-ROM,” Walsh says. These sites spent much of their lives offline, viewable only when a user met the hardware requirements listed by the creator and then inserted the CD. Today, with a decent connection, online video and all sorts of other functionality rarely stumble. In the march to high-speed wi-fi, browser-based applications, cloud computing, and computers that have no need for CD-ROM drives, some older digital artifacts have been left in the dust. In some cases, it’s as though they’re written in a dead language, so accessing their content can be tricky.

Take the CCA. It has hundreds of thousands of items in its collection, from 16th-century writings on military architecture, to 1,000-plus titles about theater and stage design, to extensive archives of exclusively digital material made between 1980 and 2000. Of the digital assets, roughly 70 or 80 percent are relatively accessible with today’s computers and software, Walsh estimates. The rest can be obscured in a number of frustrating ways. Some are orphaned because they were made with software that’s now extinct. Others might have been left incompatible by years of updates. Still more may have been created using expensive, specialized, niche software (such as the programs used by special effects studios or video game designers) that’s simply not widely available. In these instances, the databases that the Centre consults might not even be able to identify the file format or the software it came from. When a file or digital project is particularly incorrigible, Walsh often finds himself rigging up custom solutions or calling in favors.

For years, many architects and other designers have used 3-D modeling software called form·Z. The software, Walsh explains, was especially popular for rendering cutting-edge projects in the 1990s and 2000s. Each new release tends to support only files created in the last two versions, meaning that form·Z 8.5 Pro, the current version installed on CCA’s CAD workstations, can’t wrangle decades’ worth of files created in older versions. “CCA’s archives contain tens of thousands of files created in form·Z versions 1 to 4, which are almost universally inaccessible” with the solutions at hand, Walsh says.

When CCA wanted to display some of these files for an exhibition, Walsh says, “a friend-of-a-friend-of-a-friend” came to the rescue. An architect who had been working with form·Z for decades happened to have some of the earliest versions still installed on various computers. “He was able to take a handful of files … and piggy-back them from version 4, to version 5, to version 6, and so on until they were in up-to-date file formats that can be opened in form·Z 8.5 Pro, and then even exported into other file formats if necessary,” Walsh says. The solution worked, but it was a clunky chorus: open, save, close, open, save, close, open, save, close.

The digital world continues to expand and mutate in all sorts of ways that will orphan and otherwise impair file formats and programs—from ones long forgotten to ones that work just fine today but carry no guarantees against obsolescence. Instead of a patchwork of one-off solutions, perhaps there’s a better way to keep old software running smoothly—a simpler process for summoning the past on demand. A team at the Yale University Library is trying to build one.

Digital archivists deal with at least two broad categories of artifacts. There are analog objects or documents scanned into a second, digital life: digitized maps, for instance, or scanned photos. The other objects are natives of the digital world. These files can include everything from a simple compressed image to a game on a CD-ROM to a CAD design for a skyscraper. The relentless march of new versions and new platforms makes obsolescence a constant presence from the moment digital objects are conceived.

The challenges Walsh has faced are familiar across the field of software preservation, says Jessica Meyerson, who works with the Software Preservation Network (SPN), a consortium that tackles issues surrounding the maintenance of digital objects. “SPN got together to say, ‘This is absolutely a challenge, and we’re already dealing with proprietary objects that are not as simple as text files,’” Meyerson says.

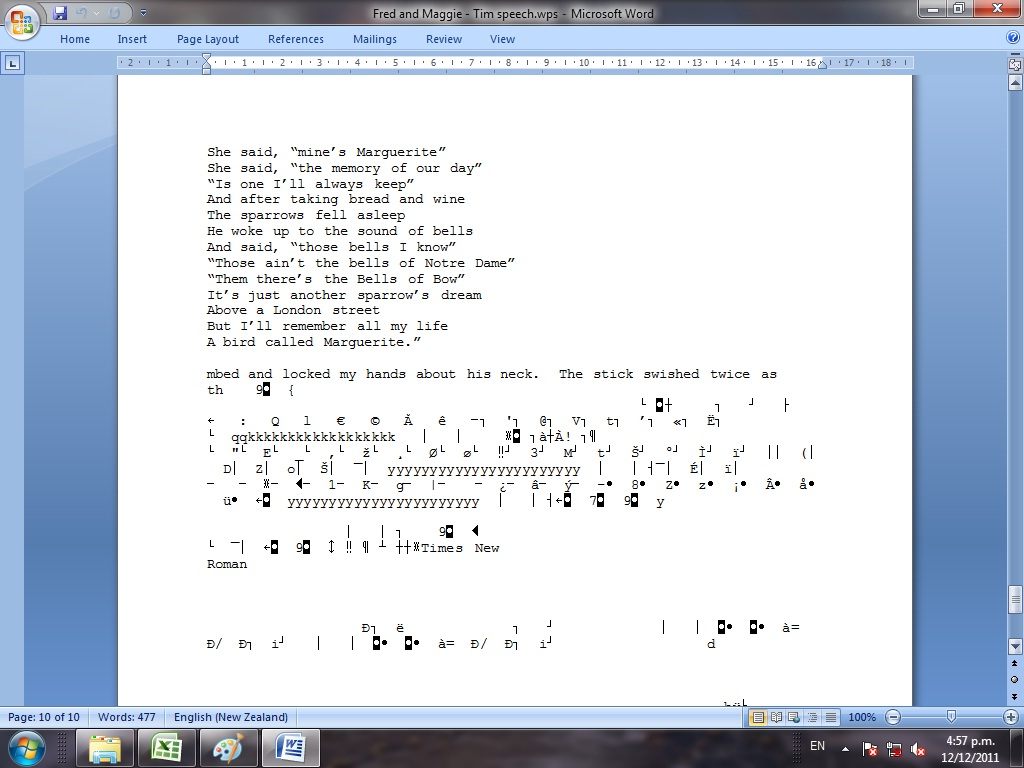

These problems grow as the complexity of a digital file increases. If you have a text or word processing document from the early 1990s, for example, you can probably view its contents with little difficulty. The formatting and fonts might be wonky, as Euan Cochrane, then with Archives New Zealand, demonstrated with “Visual Rendering Matters,” a project presenting side-by-side comparisons of how formatting can go on the fritz. When a Microsoft Works 4.0 document was opened in Word 7.0, for instance, sentence fragments crept in. (Researchers speculated that the fragments may have been deleted in the earlier version but were still stored somewhere in the file.) Hodgepodges of arrows, numbers, and letters with diacritical marks splashed across the bottom, too, but the key material was still more or less intact. “Basically, there’s a way to get you that data because it’s a less-complex format,” Meyerson says.

The more layers or metadata that a file has, the more tangled this process becomes. In a many-layered CAD file, Meyerson says, “we have no idea what this is until we render it in the original software.” Digital architecture, design, and engineering assets present such a challenge that they were the subject of a symposium at the Library of Congress last fall.

To access these complicated files, or to launch some of the sites that lived on CD-ROMs (which may need a certain operating system, browser, or other requirements to open), a user might rig up an emulation environment. An emulator is a proxy: It recreates older hardware and software on a modern-day machine. On occasion, Walsh has made some himself.

When one CCA visitor wanted to take a look at a CD-ROM-based “multimedia website” produced in conjunction with a 1996 exhibition of work by the architect Benjamin Nicholson, Walsh needed to wind back the clock. He tracked down an old license for Windows NT and installed Netscape Navigator and an old version of Adobe Reader. This all enabled decades-old functionality on a two-year-old HP tower.

This strategy works, but it has drawbacks. “These environments are time-intensive to create, will only run on a local computer, and they typically require a lot of technical know-how to set up and use,” Walsh says. Ad hoc emulation is not for the novice or the busy.

Researchers at Yale are working to solve this problem by creating a kind of digital Rosetta Stone, a universal translator, through an emulation infrastructure that will live online. “A few clicks in your web browser will allow users to open files containing data that would otherwise be lost or corrupted,” said Cochrane, who is now the library’s digital preservation manager. “You’re removing the physical element of it,” says Seth Anderson, the library’s software preservation manager. “It’s a virtual computer running on a server, so it’s not tethered to a desktop.”

Instead of treating each case as a one-off, like digital triage, this team wants to create a virtual, historical computer lab that’s comprehensive and ready for anything. Do you have a CD-ROM that was once stuffed in a sleeve on the cover of a textbook? A snappy virtual machine running Windows 98 might be able to help you out. “We could create any environment that we needed,” Anderson says. The goal is to build an emulation library big enough that there’s a good fit for any potential case—with definitive, clear results. Cochrane said the integrity should be strong enough that “you can take an old digital file into court as evidence.” Walsh says it should be unassailable; one should be able to stake her dissertation on it.

But before the project can reach that phase, there’s a lot of work to be done by hand. To recreate environments, the team needs hard copies to work from. It’s a bit like an archaeological expedition, an excavation that produces a specimen collection that can be sorted and stored. Over the last few years, the library has been acquiring a collection of “legacy computers.” Researchers scour eBay for desktop PCs from the 1990s, neon-shelled iMacs, and other machines that have long since vanished from the market. They clean up the hard drives, leaving nothing but the original operating system. The next step is to create a disk image of the hard drive, copying everything (its data, its processing systems, its quirks) to a virtual replica. “Once that’s set up, you can launch it in an emulated environment,” Anderson says.

So far, the researchers have copied thousands of CD-ROMs from the library’s collection: some games, diagrams and drawings from a Dutch shipbuilder, and a bunch of scientific reporting. (Scientists could consult old data sets to reproduce and validate or contest research findings, Anderson explains, in a way they’ve rarely been able to before.) Next up is a batch for the Yale Divinity School.

It’s not all clear sailing. Legal issues crop up, like bugs in a program. “Copyright is where the bulk of the questions are,” says Brandon Butler, the director of information policy at the University of Virginia Library. Under the auspices of the Association of Research Libraries, Butler and a handful of collaborators are in the midst of a two-year project to assess and codify best practices for old digital material, especially as they apply to licenses and fair use. The team interviewed 40 people, primarily folks working in archives, libraries, museums, and other cultural heritage institutions, for a preliminary report released last month. In those conversations, licenses emerged as “a big source of heartburn,” Butler says.

When you open a piece of software, there’s often a long, jargon-packed license full of stipulations. “If you take that at face value, that would be game over, basically,” Walsh says. Butler notes that, while there’s “an ambient fear of licenses,” these documents can actually be more flexible than people realize. That language about what is and isn’t authorized is “kind of squishy,” Butler adds. “If they don’t very clearly and explicitly forbid you from making fair uses, then courts are not going to construe them to do that.”

These documents don’t show any particular foresight—and why would they—about how much the digital landscape has shifted and how important emulation has become. Plus, “the things that preservation people do are things that no software vendor would do,” Butler says. “They’re filling a gap, and an important social function.” The terrain is still new. Butler and his collaborators plan to release a draft of some guidelines in the fall.

Since the emulation environment the researchers at Yale are creating will require only a (modern) web browser, archivists can tinker with everything on the periphery. They could, for example, run the emulator on a new computer tucked inside an old PC case with a traditional CRT monitor, if someone were studying the aesthetics of the old interface, including the feeling of a rounded, pixelated screen and a whirring beige tower sitting on the floor.

Anderson hopes to launch the first portion of the project in the spring or early summer. (It’s slated to be completed by June 2020.) The browser-based emulation won’t be available to the general public, but will be accessible in the Yale library and distributed among partners at the SPN.

For certain formats, Walsh says, the idea of on-demand emulation is “a godsend.” Compared to rigging up ad hoc workstations, at least, it can save a lot of time. These portals to the past just may end up preserving the sanity of digital archivists as much as anything else.
